Now, if I hand you a random user's password, it is technically not possible to assign a unique entropy to it. You can only assign an entropy to a model of generating passwords. So if you are asked to estimate the entropy of a password, your task is to assume the model that might have generated it.

If I gave you a password like P[rmDrds,r you might assume I randomly chose 10 characters from the set of 95 printable ASCII characters, so that to brute-force it you would have to go through 95^10 ≈ 2^65.7 possibilities, giving it an entropy of 65.7 bits. However, it's just the very weak password OpenSesame, typed with my hands shifted one key to the right on the keyboard (which is probably one of, say, 2^6 = 64 common ways to alter an easy-to-remember, low-entropy password).
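The P[rmDrds,r example can be checked with a short Python sketch. It assumes a US QWERTY layout, and the dictionary size of 10,000 common passwords is a purely hypothetical figure chosen for illustration; only the 95-character set, the length of 10, and the "about 64 alterations" come from the text. The names `ROWS`, `shift_right`, and `bits` are my own.

```python
import math

# Key rows of a US QWERTY keyboard (assumed layout), unshifted then shifted.
ROWS = [
    "`1234567890-=", "~!@#$%^&*()_+",
    "qwertyuiop[]\\", "QWERTYUIOP{}|",
    "asdfghjkl;'", 'ASDFGHJKL:"',
    "zxcvbnm,./", "ZXCVBNM<>?",
]

def shift_right(text: str) -> str:
    """Replace each character with the key immediately to its right."""
    out = []
    for ch in text:
        for row in ROWS:
            i = row.find(ch)
            if i != -1 and i + 1 < len(row):
                out.append(row[i + 1])
                break
        else:
            out.append(ch)  # no right-hand neighbour in the table: keep as typed
    return "".join(out)

def bits(count: int) -> float:
    """Entropy in bits of a uniform choice among `count` possibilities."""
    return math.log2(count)

shifted = shift_right("OpenSesame")

# Model 1: 10 characters drawn uniformly from 95 printable ASCII characters.
random_model_bits = bits(95 ** 10)

# Model 2 (hypothetical numbers): one of 10,000 common passwords,
# altered in one of 64 common ways.
weak_model_bits = bits(10_000 * 64)

print(shifted)
print(f"random-character model: {random_model_bits:.1f} bits")
print(f"common-password model:  {weak_model_bits:.1f} bits")
```

The same ten characters come out at very different entropies depending on which generating model you assume, which is the point of the paragraph above.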

What are the arguments against using H₀(B) = log₂(B) to quantify the size of the information needed to defeat the protection system? Again, my apologies for a poorly worded question.

Entropy in physics and in information science is just the logarithm (typically the natural log in physics, the base-2 log in computer science) of the number of equally likely possibilities, because it is generally easier to think about and work with the logarithm of these exceptionally large numbers than with the numbers themselves.

If I randomly generated 128 bits as my AES-128 key (which I store somewhere), it's easy to see there are 2^128 = 340,282,366,920,938,463,463,374,607,431,768,211,456 possible keys I could have used (2 equally likely choices for each bit, and the probabilities multiply).
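To make the counting concrete, here is a minimal Python sketch of the 128-bit key example. It only restates the arithmetic from the text; the variable names are my own.

```python
import math

key_bits = 128
keyspace = 2 ** key_bits  # number of equally likely keys

# The same quantity on two logarithmic scales.
entropy_bits = math.log2(keyspace)  # base-2 log, as in computer science
entropy_nats = math.log(keyspace)   # natural log, as in physics

print(f"{keyspace:,} possible keys")
print(f"{entropy_bits} bits, about {entropy_nats:.2f} nats")
```

The 39-digit count and the number 128 describe the same thing, and the logarithm is the easier of the two to reason about.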

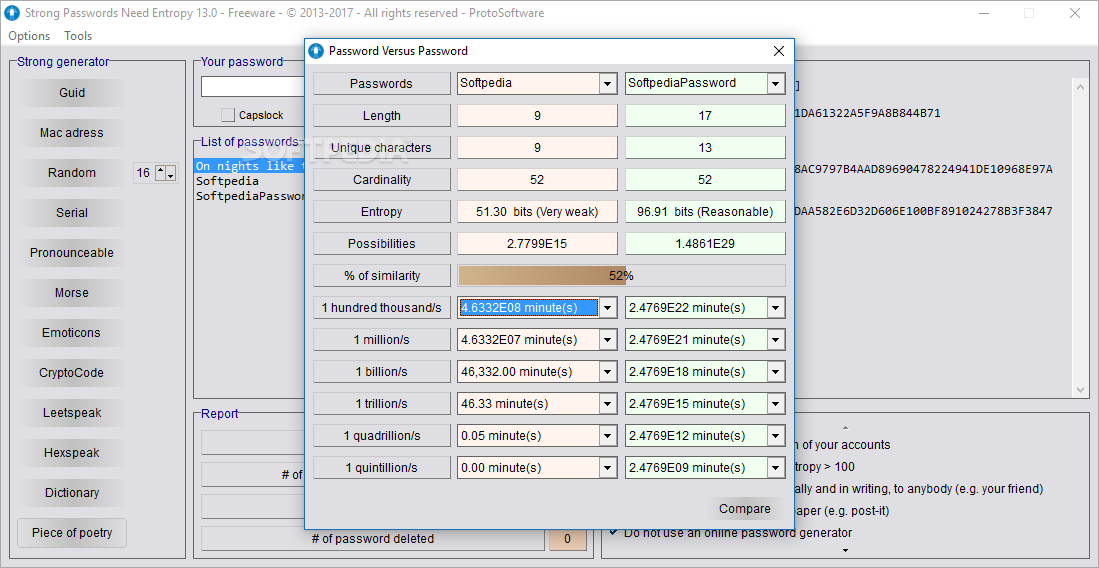

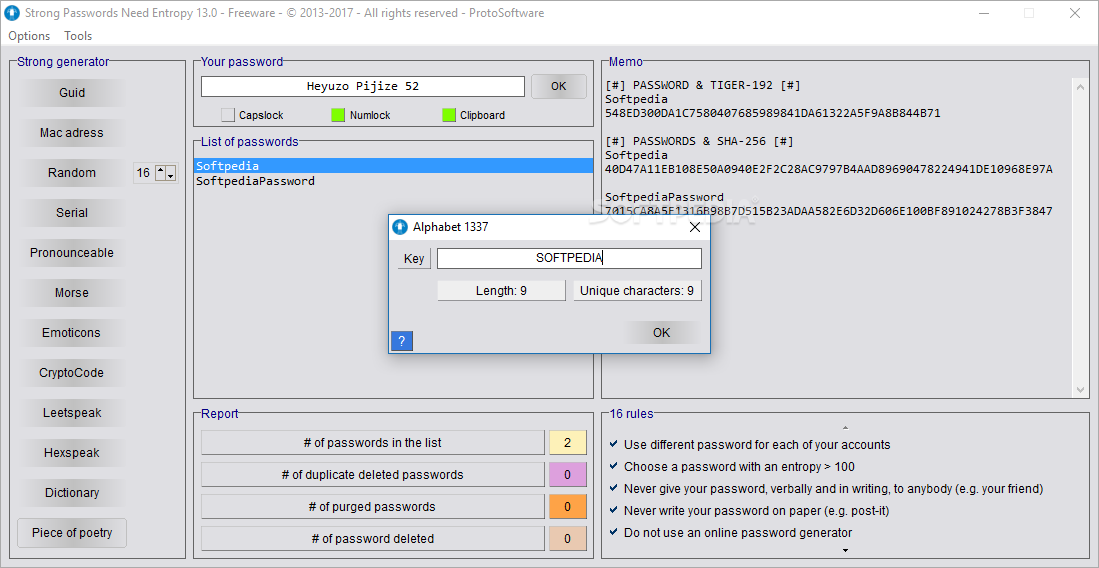

I understand how to calculate password entropy and what the Length and Character values represent. I also have a reasonable understanding of other types of entropy. However, password entropy seems to be considerably different (in form) from other types of entropy: a simple log₂(D), where D is a difficulty or complexity metric. Does password entropy satisfy the requirements of other types of entropy: additive, linear, etc.? I'd like to understand how the concept of password entropy was developed, so that I can understand other applications of this form of entropy. For a stupidly simple example, if my system requires two passwords, can I just add the entropy of each password?

EDIT: I've clearly not phrased this question very well. Let me try again from a more cyber security perspective. We can define the size of the information H₀(A) in a set A as the number of bits necessary to encode each element of A separately. For a password, the size of the set A (and hence the size of the information H₀(A)) can be quantified in a straightforward manner: N^L. Now let A be the information needed to defeat a protection system on a device. I'm trying to get some insight into situations where the size of A is not so easily quantifiable, but I have some metric that captures the set of information, say 'B'.
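As a rough illustration of the N^L count and the two-password example, the sketch below assumes N is the number of possible characters and L the password length, that each character is chosen uniformly and independently, and that the two passwords are independent of each other. Under those assumptions (and only under them) the counts multiply, so the logarithms add. The function name `hartley_bits` and the sample sizes are my own choices.

```python
import math

def hartley_bits(n_symbols: int, length: int) -> float:
    """log2(N^L) = L * log2(N), assuming uniform and independent characters."""
    return length * math.log2(n_symbols)

# Two illustrative passwords: 8 lowercase letters, 10 printable ASCII characters.
first = hartley_bits(26, 8)
second = hartley_bits(95, 10)

# Size of the combined search space when both passwords are required.
combined = math.log2((26 ** 8) * (95 ** 10))

print(f"{first:.1f} + {second:.1f} = {first + second:.1f} bits")
print(f"log2 of the joint count: {combined:.1f} bits")
```

If the two passwords are correlated, for example when the second is derived from the first, the joint count is smaller than the product and the simple sum overstates the entropy.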
